主要介绍一下 Elasticsearch中 Analyzer 分词器的构成 和一些Es中内置的分词器 以及如何使用它们

es 提供了 analyze api 可以方便我们快速的指定 某个分词器 然后对输入的text文本进行分词 帮助我们学习和实验分词器

POST _analyze

{

"analyzer": "standard",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

[ the, 2, quick, brown, foxes, jumped, over, the, lazy, dog's, bone ]

在ES中有很重要的一个概念就是 分词,ES的全文检索也是基于分词结合倒排索引做的。所以这一文我们来看下何谓之分词。如何分词。

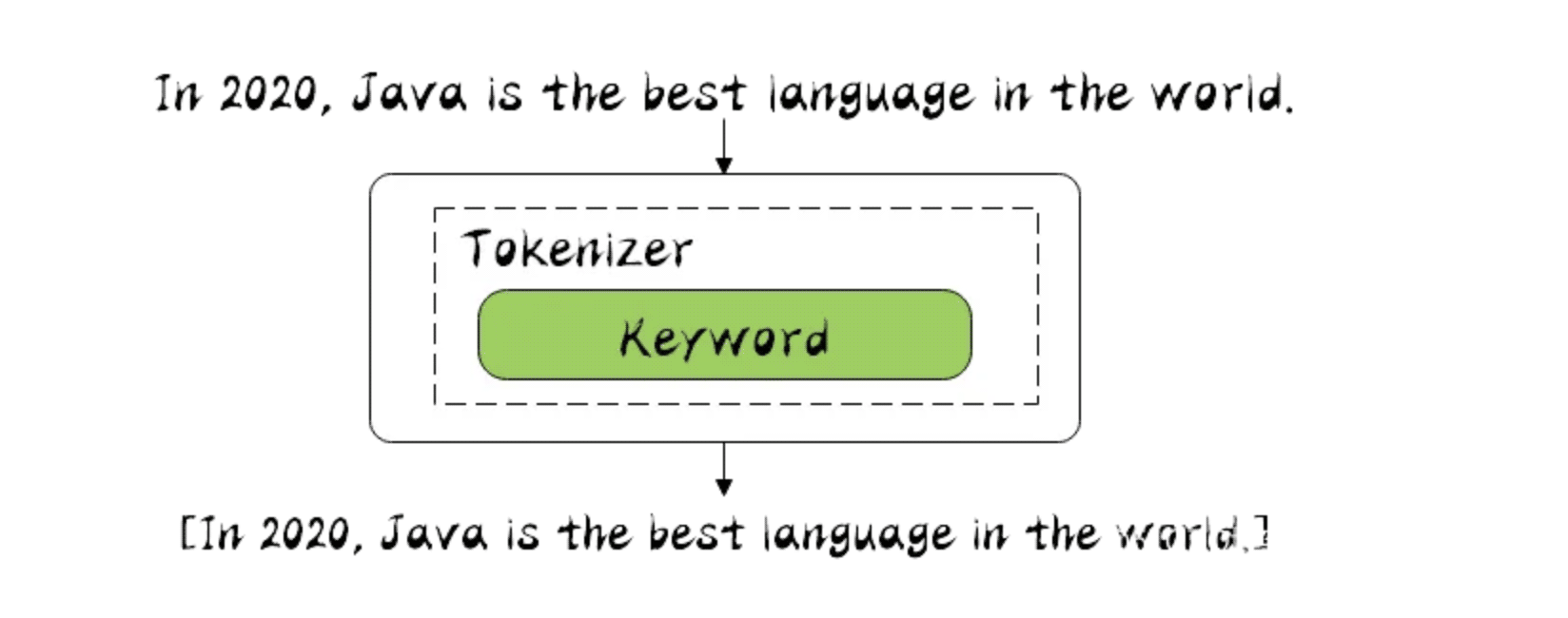

分词器是专门处理分词的组件,在很多中间件设计中每个组件的职责都划分的很清楚,单一职责原则,以后改的时候好扩展。

分词器由三部分组成。

分词场景:

在es中有不少内置分词器

Standard 是es中默认的分词器 , 它是按照 Unicode 文本分割算法去 对文本进行分词的

POST _analyze

{

"analyzer": "standard",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

[ the, 2, quick, brown, foxes, jumped, over, the, lazy, dog's, bone ]

包括了 转小写的 token filter 和 stop token filter 去除停顿词

Tokenizer

Token Filters

standard token filter currently does nothing. It remains as a placeholder in case some filtering function needs to be added in a future version.)// 使用 自定义的分词器 基于 standard

PUT my_index

{

"settings": {

"analysis": {

"analyzer": {

"my_english_analyzer": {

"type": "standard",

"max_token_length": 5, // 最大词数

"stopwords": "_english_" // 开启过滤停顿词 使用 englisth 语法

}

}

}

}

}

GET my_index/_analyze

{

"analyzer": "my_english_analyzer",

"text": "The hellogoodname jack"

}

// 可以看到 最长5个字符 就需要进行分词了, 并且停顿词 the 没有了

["hello", "goodn", "ame", "jack"]

简单的分词器 分词规则就是 遇到 非字母的 就分词, 并且转化为小写,(lowercase tokennizer )

POST _analyze

{

"analyzer": "simple",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

[ the, quick, brown, foxes, jumped, over, the, lazy, dog, s, bone ]

Tokenizer

无配置参数

simple analyzer 分词器的实现 就是如下

PUT /simple_example

{

"settings": {

"analysis": {

"analyzer": {

"rebuilt_simple": {

"tokenizer": "lowercase",

"filter": [

]

}

}

}

}

}

stop analyzer 和 simple analyzer 一样, 只是多了 过滤 stop word 的 token filter , 并且默认使用 english 停顿词规则

POST _analyze

{

"analyzer": "stop",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

// 可以看到 非字母进行分词 并且转小写 然后 去除了停顿词

[ quick, brown, foxes, jumped, over, lazy, dog, s, bone ]

Tokenizer

Token filters

如下就是对 Stop Analyzer 的实现 , 先转小写 后进行停顿词的过滤

PUT /stop_example

{

"settings": {

"analysis": {

"filter": {

"english_stop": {

"type": "stop",

"stopwords": "_english_"

}

},

"analyzer": {

"rebuilt_stop": {

"tokenizer": "lowercase",

"filter": [

"english_stop"

]

}

}

}

}

}

设置 stopwords 参数 指定过滤的停顿词列表

PUT my_index

{

"settings": {

"analysis": {

"analyzer": {

"my_stop_analyzer": {

"type": "stop",

"stopwords": ["the", "over"]

}

}

}

}

}

POST my_index/_analyze

{

"analyzer": "my_stop_analyzer",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

[ quick, brown, foxes, jumped, lazy, dog, s, bone ]

空格 分词器, 顾名思义 遇到空格就进行分词, 不会转小写

POST _analyze

{

"analyzer": "whitespace",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

[ The, 2, QUICK, Brown-Foxes, jumped, over, the, lazy, dog's, bone. ]

Tokenizer

无配置

whitespace analyzer 的实现就是如下, 可以根据实际情况进行 添加 filter

PUT /whitespace_example

{

"settings": {

"analysis": {

"analyzer": {

"rebuilt_whitespace": {

"tokenizer": "whitespace",

"filter": [

]

}

}

}

}

}

很特殊 它不会进行分词, 怎么输入 就怎么输出

POST _analyze

{

"analyzer": "keyword",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

//注意 这里并没有进行分词 而是原样输出

[ The 2 QUICK Brown-Foxes jumped over the lazy dog's bone. ]

Tokennizer

无配置

rebuit 如下 就是 Keyword Analyzer 实现

PUT /keyword_example

{

"settings": {

"analysis": {

"analyzer": {

"rebuilt_keyword": {

"tokenizer": "keyword",

"filter": [

]

}

}

}

}

}

正则表达式 进行拆分 ,注意 正则匹配的是 标记, 就是要被分词的标记 默认是 按照 \w+ 正则分词

POST _analyze

{

"analyzer": "pattern",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

// 默认是 按照 \w+ 正则

[ the, 2, quick, brown, foxes, jumped, over, the, lazy, dog, s, bone ]

Tokennizer

Token Filters

| pattern | A Java regular expression, defaults to \W+. |

|---|---|

| flags | Java regular expression. |

| lowercase | 转小写 默认开启 true. |

| stopwords | 停顿词过滤 默认none 未开启 , Defaults to _none_. |

| stopwords_path | 停顿词文件路径 |

Pattern Analyzer 的实现 就是如下

PUT /pattern_example

{

"settings": {

"analysis": {

"tokenizer": {

"split_on_non_word": {

"type": "pattern",

"pattern": "\\W+"

}

},

"analyzer": {

"rebuilt_pattern": {

"tokenizer": "split_on_non_word",

"filter": [

"lowercase"

]

}

}

}

}

}

提供了如下 这么多语言分词器 , 其中 english 也在其中

arabic, armenian, basque, bengali, bulgarian, catalan, czech, dutch, english, finnish, french, galician, german, hindi, hungarian, indonesian, irish, italian, latvian, lithuanian, norwegian, portuguese, romanian, russian, sorani, spanish, swedish, turkish.

GET _analyze

{

"analyzer": "english",

"text": "The 2 QUICK Brown-Foxes jumped over the lazy dog's bone."

}

[ 2, quick, brown, foxes, jumped, over, lazy, dog, bone ]

没啥好说的 就是当提供的 内置分词器不满足你的需求的时候 ,你可以结合 如下3部分

PUT my_index

{

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"char_filter": [

"emoticons"

],

"tokenizer": "punctuation",

"filter": [

"lowercase",

"english_stop"

]

}

},

"tokenizer": {

"punctuation": {

"type": "pattern",

"pattern": "[ .,!?]"

}

},

"char_filter": {

"emoticons": {

"type": "mapping",

"mappings": [

":) => _happy_",

":( => _sad_"

]

}

},

"filter": {

"english_stop": {

"type": "stop",

"stopwords": "_english_"

}

}

}

}

}

POST my_index/_analyze

{

"analyzer": "my_custom_analyzer",

"text": "I'm a :) person, and you?"

}

[ i'm, _happy_, person, you ]

本篇主要介绍了 Elasticsearch 中 的一些 内置的 Analyzer 分词器, 这些内置分词器可能不会常用,但是如果你能好好梳理一下这些内置 分词器,一定会对你理解Analyzer 有很大的帮助, 可以帮助你理解 Character Filters , Tokenizer 和 Token Filters 的用处.

有机会再聊聊 一些中文分词器 如 IKAnalyzer, ICU Analyzer ,Thulac 等等.. 毕竟开发中 中文分词器用到更多些