Introductory article: https:

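In late 2016, CoreOS introduced the Operator pattern and released the Prometheus Operator as a working example of it. The Prometheus Operator automatically creates and manages Prometheus monitoring instances.

The mission of the Prometheus Operator is to make running Prometheus on Kubernetes as easy as possible, while preserving configurability and keeping the configuration Kubernetes-native.

The Prometheus Operator makes our lives easier for both deployment and maintenance.

To understand how, we first need to understand how the Prometheus Operator works.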

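Prometheus Operator architecture diagram.

After successfully deploying the Prometheus Operator, we can see a new set of CRDs (Custom Resource Definitions):

Prometheus, which defines a Prometheus deployment; the Operator automatically generates the Prometheus scrape configuration from the definitions.
Alertmanager, which defines an Alertmanager deployment.

When a new version of a service is rolled out, a new Pod is created. Prometheus watches the Kubernetes API, so when it detects such a change it generates a new set of configuration for the new service (Pod).

The Prometheus Operator uses a CRD called ServiceMonitor to abstract the configuration of scrape targets.

Here is an example ServiceMonitor: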

    apiVersion: monitoring.coreos.com/v1alpha1
    kind: ServiceMonitor
    metadata:
      name: frontend
      labels:
        tier: frontend
    spec:
      selector:
        matchLabels:
          tier: frontend
      endpoints:
      - port: web      # exporter port; this is the name of the port, not its number
        interval: 10s  # scrape interval

This only defines how a group of services should be monitored. Next we need to define a Prometheus instance that includes this ServiceMonitor in its configuration:

    apiVersion: monitoring.coreos.com/v1alpha1
    kind: Prometheus
    metadata:
      name: prometheus-frontend
      labels:
        prometheus: frontend
    spec:
      version: v1.3.0
      # Include every ServiceMonitor labelled "tier=frontend" in this server's configuration
      serviceMonitors:
      - selector:
          matchLabels:
            tier: frontend

Prometheus will now monitor every service that carries the tier: frontend label.
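To make the selection rule concrete, here is a minimal sketch of the matchLabels semantics in shell: a resource is selected only when every key/value pair in the selector also appears among its labels. The function name matches_selector and the comma-separated key=value encoding are assumptions made for this illustration; the Operator itself evaluates selectors through the Kubernetes API.

```shell
#!/usr/bin/env bash
# matches_selector SELECTOR LABELS
# Both arguments are comma-separated key=value lists (an encoding chosen
# only for this sketch). Returns 0 when every pair in SELECTOR is also
# present in LABELS, which mirrors how matchLabels behaves.
matches_selector() {
  local selector="$1" labels="$2" pair
  local IFS=','
  for pair in $selector; do
    case ",$labels," in
      *",$pair,"*) ;;      # required pair is present, keep checking
      *) return 1 ;;       # pair missing or has a different value
    esac
  done
  return 0
}

# A service labelled tier=frontend is picked up, one labelled tier=backend is not.
matches_selector "tier=frontend" "app=web,tier=frontend" && echo "selected"
matches_selector "tier=frontend" "tier=backend" || echo "ignored"
```

Extra labels on the service do not matter; only the pairs named in the selector have to match.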

Prerequisites: Helm.

Let's get hands-on:

    helm repo add coreos https://s3-eu-west-1.amazonaws.com/coreos-charts/stable/
    helm install coreos/prometheus-operator --name prometheus-operator --namespace monitoring

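At this point, the Prometheus Operator's TPRs (ThirdPartyResources, the predecessor of CRDs) are installed in our cluster.

Now let's deploy Prometheus, Alertmanager, and Grafana.

TIP: When I work with a large Helm chart, I prefer to keep all my custom changes in separate values.yaml files. This makes later changes and modifications easier for me and my colleagues.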

    helm install coreos/kube-prometheus --name kube-prometheus \
      -f my_changes/prometheus.yaml \
      -f my_changes/grafana.yaml \
      -f my_changes/alertmanager.yaml

Check that everything is running:

    kubectl -n monitoring get po
    NAME                                                  READY  STATUS   RESTARTS  AGE
    alertmanager-kube-prometheus-0                        2/2    Running  0         1h
    kube-prometheus-exporter-kube-state-68dbb4f7c9-tr6rp  2/2    Running  0         1h
    kube-prometheus-exporter-node-bqcj4                   1/1    Running  0         1h
    kube-prometheus-exporter-node-jmcq2                   1/1    Running  0         1h
    kube-prometheus-exporter-node-qnzsn                   1/1    Running  0         1h
    kube-prometheus-exporter-node-v4wn8                   1/1    Running  0         1h
    kube-prometheus-exporter-node-x5226                   1/1    Running  0         1h
    kube-prometheus-exporter-node-z996c                   1/1    Running  0         1h
    kube-prometheus-grafana-54c96ffc77-tjl6g              2/2    Running  0         1h
    prometheus-kube-prometheus-0                          2/2    Running  0         1h
    prometheus-operator-1591343780-5vb5q                  1/1    Running  0         1h

Open the Prometheus UI and look at the Targets page:

    kubectl -n monitoring port-forward prometheus-kube-prometheus-0 9090
    Forwarding from 127.0.0.1:9090 -> 9090

The browser shows the following:

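I have tested this installation method myself, and all the YAML files it uses are packaged together. After unpacking, a plain kubectl apply is enough, and every node and Pod in the current cluster is monitored automatically. You only need to change the images referenced in the YAML files; I pushed all of mine to my company's public Harbor registry. Some images are also packaged as tar archives that can be loaded directly with docker load -i.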

kube-state.tar.gz

webhook-dingtalk.tar.gz

prometheus-adapter.tar.gz

    # The package bundles the configuration for node-exporter, Alertmanager, Grafana, Prometheus, and ingress; just unpack it on the K8s master.
    [root@lecode-k8s-master monitor]# ll
    total 1820
    -rw-r--r-- 1 root root 875 Mar 11 2022 alertmanager-alertmanager.yaml
    -rw-r--r-- 1 root root 515 Mar 11 2022 alertmanager-podDisruptionBudget.yaml
    -rw-r--r-- 1 root root 4337 Mar 11 2022 alertmanager-prometheusRule.yaml
    -rw-r--r-- 1 root root 1483 Mar 14 2022 alertmanager-secret.yaml
    -rw-r--r-- 1 root root 301 Mar 11 2022 alertmanager-serviceAccount.yaml
    -rw-r--r-- 1 root root 540 Mar 11 2022 alertmanager-serviceMonitor.yaml
    -rw-r--r-- 1 root root 614 Mar 11 2022 alertmanager-service.yaml
    drwxr-x--- 2 root root 4096 Oct 25 13:49 backsvc  # Grafana Service manifest in NodePort mode for external access; optional
    -rw-r--r-- 1 root root 278 Mar 11 2022 blackbox-exporter-clusterRoleBinding.yaml
    -rw-r--r-- 1 root root 287 Mar 11 2022 blackbox-exporter-clusterRole.yaml
    -rw-r--r-- 1 root root 1392 Mar 11 2022 blackbox-exporter-configuration.yaml
    -rw-r--r-- 1 root root 3081 Mar 11 2022 blackbox-exporter-deployment.yaml
    -rw-r--r-- 1 root root 96 Mar 11 2022 blackbox-exporter-serviceAccount.yaml
    -rw-r--r-- 1 root root 680 Mar 11 2022 blackbox-exporter-serviceMonitor.yaml
    -rw-r--r-- 1 root root 540 Mar 11 2022 blackbox-exporter-service.yaml
    -rw-r--r-- 1 root root 2521 Oct 25 13:36 dingtalk-dep.yaml
    -rw-r--r-- 1 root root 721 Mar 11 2022 grafana-dashboardDatasources.yaml
    -rw-r--r-- 1 root root 1448347 Mar 11 2022 grafana-dashboardDefinitions.yaml
    -rw-r--r-- 1 root root 625 Mar 11 2022 grafana-dashboardSources.yaml
    -rw-r--r-- 1 root root 8098 Mar 11 2022 grafana-deployment.yaml
    -rw-r--r-- 1 root root 86 Mar 11 2022 grafana-serviceAccount.yaml
    -rw-r--r-- 1 root root 398 Mar 11 2022 grafana-serviceMonitor.yaml
    -rw-r--r-- 1 root root 468 Mar 30 2022 grafana-service.yaml
    drwxr-xr-x 2 root root 4096 Oct 25 13:32 ingress  # Ingress resources, usable as-is to expose Prometheus and Grafana externally
    -rw-r--r-- 1 root root 2639 Mar 14 2022 kube-prometheus-prometheusRule.yaml
    -rw-r--r-- 1 root root 3380 Mar 14 2022 kube-prometheus-prometheusRule.yamlbak
    -rw-r--r-- 1 root root 63531 Mar 11 2022 kubernetes-prometheusRule.yaml
    -rw-r--r-- 1 root root 6912 Mar 11 2022 kubernetes-serviceMonitorApiserver.yaml
    -rw-r--r-- 1 root root 425 Mar 11 2022 kubernetes-serviceMonitorCoreDNS.yaml
    -rw-r--r-- 1 root root 6431 Mar 11 2022 kubernetes-serviceMonitorKubeControllerManager.yaml
    -rw-r--r-- 1 root root 7629 Mar 11 2022 kubernetes-serviceMonitorKubelet.yaml
    -rw-r--r-- 1 root root 530 Mar 11 2022 kubernetes-serviceMonitorKubeScheduler.yaml
    -rw-r--r-- 1 root root 464 Mar 11 2022 kube-state-metrics-clusterRoleBinding.yaml
    -rw-r--r-- 1 root root 1712 Mar 11 2022 kube-state-metrics-clusterRole.yaml
    -rw-r--r-- 1 root root 2934 Oct 25 13:40 kube-state-metrics-deployment.yaml
    -rw-r--r-- 1 root root 3082 Mar 11 2022 kube-state-metrics-prometheusRule.yaml
    -rw-r--r-- 1 root root 280 Mar 11 2022 kube-state-metrics-serviceAccount.yaml
    -rw-r--r-- 1 root root 1011 Mar 11 2022 kube-state-metrics-serviceMonitor.yaml
    -rw-r--r-- 1 root root 580 Mar 11 2022 kube-state-metrics-service.yaml
    -rw-r--r-- 1 root root 444 Mar 11 2022 node-exporter-clusterRoleBinding.yaml
    -rw-r--r-- 1 root root 461 Mar 11 2022 node-exporter-clusterRole.yaml
    -rw-r--r-- 1 root root 3047 Mar 11 2022 node-exporter-daemonset.yaml
    -rw-r--r-- 1 root root 14356 Apr 11 2022 node-exporter-prometheusRule.yaml
    -rw-r--r-- 1 root root 270 Mar 11 2022 node-exporter-serviceAccount.yaml
    -rw-r--r-- 1 root root 850 Mar 11 2022 node-exporter-serviceMonitor.yaml
    -rw-r--r-- 1 root root 492 Mar 11 2022 node-exporter-service.yaml
    -rw-r--r-- 1 root root 482 Mar 11 2022 prometheus-adapter-apiService.yaml
    -rw-r--r-- 1 root root 576 Mar 11 2022 prometheus-adapter-clusterRoleAggregatedMetricsReader.yaml
    -rw-r--r-- 1 root root 494 Mar 11 2022 prometheus-adapter-clusterRoleBindingDelegator.yaml
    -rw-r--r-- 1 root root 471 Mar 11 2022 prometheus-adapter-clusterRoleBinding.yaml
    -rw-r--r-- 1 root root 378 Mar 11 2022 prometheus-adapter-clusterRoleServerResources.yaml
    -rw-r--r-- 1 root root 409 Mar 11 2022 prometheus-adapter-clusterRole.yaml
    -rw-r--r-- 1 root root 2204 Mar 11 2022 prometheus-adapter-configMap.yaml
    -rw-r--r-- 1 root root 2530 Oct 25 13:39 prometheus-adapter-deployment.yaml
    -rw-r--r-- 1 root root 506 Mar 11 2022 prometheus-adapter-podDisruptionBudget.yaml
    -rw-r--r-- 1 root root 515 Mar 11 2022 prometheus-adapter-roleBindingAuthReader.yaml
    -rw-r--r-- 1 root root 287 Mar 11 2022 prometheus-adapter-serviceAccount.yaml
    -rw-r--r-- 1 root root 677 Mar 11 2022 prometheus-adapter-serviceMonitor.yaml
    -rw-r--r-- 1 root root 501 Mar 11 2022 prometheus-adapter-service.yaml
    -rw-r--r-- 1 root root 447 Mar 11 2022 prometheus-clusterRoleBinding.yaml
    -rw-r--r-- 1 root root 394 Mar 11 2022 prometheus-clusterRole.yaml
    -rw-r--r-- 1 root root 5000 Mar 11 2022 prometheus-operator-prometheusRule.yaml
    -rw-r--r-- 1 root root 715 Mar 11 2022 prometheus-operator-serviceMonitor.yaml
    -rw-r--r-- 1 root root 499 Mar 11 2022 prometheus-podDisruptionBudget.yaml
    -rw-r--r-- 1 root root 14021 Mar 11 2022 prometheus-prometheusRule.yaml
    -rw-r--r-- 1 root root 1184 Mar 11 2022 prometheus-prometheus.yaml
    -rw-r--r-- 1 root root 471 Mar 11 2022 prometheus-roleBindingConfig.yaml
    -rw-r--r-- 1 root root 1547 Mar 11 2022 prometheus-roleBindingSpecificNamespaces.yaml
    -rw-r--r-- 1 root root 366 Mar 11 2022 prometheus-roleConfig.yaml
    -rw-r--r-- 1 root root 2047 Mar 11 2022 prometheus-roleSpecificNamespaces.yaml
    -rw-r--r-- 1 root root 271 Mar 11 2022 prometheus-serviceAccount.yaml
    -rw-r--r-- 1 root root 531 Mar 11 2022 prometheus-serviceMonitor.yaml
    -rw-r--r-- 1 root root 558 Mar 11 2022 prometheus-service.yaml
    drw-r--r-- 2 root root 4096 Oct 24 12:31 setup

Apply the YAML files in the setup directory first, then the YAML files in the top-level directory. The backsvc directory holds the Grafana Service manifest; switch it between NodePort and ClusterIP as needed. The cluster automatically deploys node-exporter on every K8s node and collects data. The initial Grafana login is admin / admin; add a dashboard to start monitoring the K8s cluster.

    [root@lecode-k8s-master monitor]# cd setup/
    [root@lecode-k8s-master setup]# kubectl apply -f .
    [root@lecode-k8s-master setup]# cd ..
    [root@lecode-k8s-master monitor]# kubectl apply -f .
    [root@lecode-k8s-master monitor]# kubectl get po -n monitoring
    NAME                                  READY  STATUS   RESTARTS  AGE
    alertmanager-main-0                   2/2    Running  0         74m
    alertmanager-main-1                   2/2    Running  0         74m
    alertmanager-main-2                   2/2    Running  0         74m
    blackbox-exporter-6798fb5bb4-d9m7m    3/3    Running  0         74m
    grafana-64668d8465-x7x9z              1/1    Running  0         74m
    kube-state-metrics-569d89897b-hlqxj   3/3    Running  0         57m
    node-exporter-6vqxg                   2/2    Running  0         74m
    node-exporter-7dxh6                   2/2    Running  0         74m
    node-exporter-9j5xk                   2/2    Running  0         74m
    node-exporter-ftrmn                   2/2    Running  0         74m
    node-exporter-qszkn                   2/2    Running  0         74m
    node-exporter-wjkgj                   2/2    Running  0         74m
    prometheus-adapter-5dd78c75c6-h2jf7   1/1    Running  0         58m
    prometheus-adapter-5dd78c75c6-qpwzv   1/1    Running  0         58m
    prometheus-k8s-0                      2/2    Running  0         74m
    prometheus-k8s-1                      2/2    Running  0         74m
    prometheus-operator-75d9b475d9-mmzgs  2/2    Running  0         80m
    webhook-dingtalk-6ffc94b49-z9z6l      1/1    Running  0         61m
    [root@lecode-k8s-master backsvc]# kubectl get svc -n monitoring
    NAME                   TYPE       CLUSTER-IP     EXTERNAL-IP  PORT(S)                     AGE
    alertmanager-main      NodePort   10.98.35.93    <none>       9093:30093/TCP              72m
    alertmanager-operated  ClusterIP  None           <none>       9093/TCP,9094/TCP,9094/UDP  72m
    blackbox-exporter      ClusterIP  10.109.10.110  <none>       9115/TCP,19115/TCP          72m
    grafana                NodePort   10.110.48.214  <none>       3000:30300/TCP              72m
    kube-state-metrics     ClusterIP  None           <none>       8443/TCP,9443/TCP           72m
    node-exporter          ClusterIP  None           <none>       9100/TCP                    72m
    prometheus-adapter     ClusterIP  10.97.23.176   <none>       443/TCP                     72m
    prometheus-k8s         ClusterIP  10.100.92.254  <none>       9090/TCP                    72m
    prometheus-operated    ClusterIP  None           <none>       9090/TCP                    72m
    prometheus-operator    ClusterIP  None           <none>       8443/TCP                    78m
    webhook-dingtalk       ClusterIP  10.100.131.63  <none>       80/TCP                      72m

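There are three ways to expose the services: a Service in NodePort mode, a Kubernetes Ingress, or a local nginx proxy.

Local nginx proxy mode

Here I use NodePort mode for Grafana and the nginx proxy for Prometheus.

The nginx configuration file is shown below: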

    [root@lecode-k8s-master setup]# cat /usr/local/nginx/conf/4-layer-conf.d/lecode-prometheus-operator.conf
    # Proxy the built-in Prometheus dashboard UI
    upstream prometheus-dashboard {
        server 10.100.92.254:9090;  # IP of the prometheus-k8s Service
    }
    server {
        listen 9090;
        proxy_pass prometheus-dashboard;
    }
    # Proxy Grafana
    upstream grafana {
        server 10.1.82.89:3000;  # IP of the Grafana Service
    }
    server {
        listen 3000;
        proxy_pass grafana;
    }
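Because the upstream addresses in this configuration are Service IPs, they have to be edited by hand whenever a Service is recreated. As a rough sketch, a stanza can instead be generated from the kubectl get svc table. The helper name render_stream_proxy is hypothetical, and it assumes the proxy listens on the same port it forwards to, as in the configuration shown here.

```shell
#!/usr/bin/env bash
# render_stream_proxy NAME PORT
# Reads the table printed by `kubectl get svc` on stdin, looks up the
# CLUSTER-IP column (third field) for service NAME, and prints an nginx
# stream stanza that listens on PORT and forwards to <cluster-ip>:PORT.
# Fails for unknown or headless services (CLUSTER-IP "None").
render_stream_proxy() {
  local name="$1" port="$2" ip
  ip="$(awk -v n="$name" '$1 == n { print $3; exit }')"
  [ -n "$ip" ] && [ "$ip" != "None" ] || return 1
  cat <<EOF
upstream ${name} {
    server ${ip}:${port};
}
server {
    listen ${port};
    proxy_pass ${name};
}
EOF
}
```

For example, `kubectl get svc -n monitoring | render_stream_proxy prometheus-k8s 9090` would print a stanza equivalent to the Prometheus one in the file.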

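Open the Prometheus Targets page

Open Grafana (the default credentials are admin / admin)

Download the matching dashboards from the Grafana website: https://grafana.com/grafana/dashboards/

Install the matching exporter locally on the host of each service to collect data (MySQL is used as the example here).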

    # Download the exporter for the service
    # Download page: https://www.modb.pro/db/216588
    # Download page: http://prometheus.io/download/
    # After downloading, unpack mysqld_exporter-0.13.0.linux-amd64.tar.gz
    # Configure mysqld_exporter

In root's home directory, create a .my.cnf file with the following content:

    [root@lecode-test-001 ~]# cat /root/.my.cnf
    [client]
    user=mysql_monitor
    password=Mysql@123

    # Create the MySQL user and grant privileges
    CREATE USER 'mysql_monitor'@'localhost' IDENTIFIED BY 'Mysql@123' WITH MAX_USER_CONNECTIONS 3;
    GRANT PROCESS, REPLICATION CLIENT, SELECT ON *.* TO 'mysql_monitor'@'localhost';
    FLUSH PRIVILEGES;
    EXIT

    # Start mysqld_exporter
    [root@lecode-test-001 mysql-exporter]# nohup mysqld_exporter &
    # Find the listening port
    [root@lecode-test-001 mysql-exporter]# tail -f nohup.out
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:277 msg="Starting msqyld_exporter" version="(version=0.13.0, branch=HEAD, revision=ad2847c7fa67b9debafccd5a08bacb12fc9031f1)"
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:278 msg="Build context" (gogo1.16.4,userroot@e2043849cb1f,date20210531-07:30:16)=(MISSING)
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:293 msg="Scraper enabled" scraper=global_status
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:293 msg="Scraper enabled" scraper=global_variables
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:293 msg="Scraper enabled" scraper=slave_status
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:293 msg="Scraper enabled" scraper=info_schema.innodb_cmp
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:293 msg="Scraper enabled" scraper=info_schema.innodb_cmpmem
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:293 msg="Scraper enabled" scraper=info_schema.query_response_time
    level=info ts=2022-10-25T09:26:54.464Z caller=mysqld_exporter.go:303 msg="Listening on address" address=:9104  # this is the exporter port
    level=info ts=2022-10-25T09:26:54.464Z caller=tls_config.go:191 msg="TLS is disabled." http2=false
    # Check the port
    [root@lecode-test-001 mysql-exporter]# ss -lntup |grep 9104
    tcp LISTEN 0 128 :::9104 :::* users:(("mysqld_exporter",pid=26115,fd=3))
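Beyond checking that the port is open, it can help to confirm that the exporter actually reaches MySQL. The sketch below assumes the exporter serves the Prometheus text format on :9104/metrics and reports a mysql_up gauge (1 when the database is reachable); metric_value is a hypothetical helper name introduced only for this example.

```shell
#!/usr/bin/env bash
# metric_value NAME
# Reads Prometheus text-format metrics on stdin and prints the value of
# the first unlabelled sample whose metric name is exactly NAME.
# Comment lines (# HELP / # TYPE) are skipped because their first field is "#".
metric_value() {
  awk -v name="$1" '$1 == name { print $2; exit }'
}
```

On the exporter host this could be used as `curl -s http://localhost:9104/metrics | metric_value mysql_up`; a value of 0 would typically point at wrong credentials in .my.cnf or missing grants.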

Create an Endpoints resource that points at the exporter port on the service host, bind it to a Service, and add it to the Prometheus targets through a ServiceMonitor.

    kubectl -n monitoring get prometheus kube-prometheus -o yaml
    apiVersion: monitoring.coreos.com/v1
    kind: Prometheus
    metadata:
      labels:
        app: prometheus
        chart: prometheus-0.0.14
        heritage: Tiller
        prometheus: kube-prometheus
        release: kube-prometheus
      name: kube-prometheus
      namespace: monitoring
    spec:
      ...
      baseImage: quay.io/prometheus/prometheus
      serviceMonitorSelector:
        matchLabels:
          prometheus: kube-prometheus
    # Next, create the corresponding ServiceMonitor resource in this format

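An Endpoints resource brings the external service into the cluster and is bound to a matching Service. The ServiceMonitor then selects that Service so that Prometheus scrapes its data. The ServiceMonitor is linked to the Service through a label selector, while the Service (which has no selector of its own) is linked to the Endpoints by sharing the same name and namespace, with matching port names. Make sure the labels and ports are consistent across all three resources.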

    [root@lecode-k8s-master monitor]# cat mysql.yaml
    apiVersion: v1
    kind: Endpoints
    metadata:
      name: mysql-test
      namespace: monitoring
    subsets:
    - addresses:
      - ip: 192.168.1.17  # IP of the server running the application
      ports:
      - name: mysql
        port: 9104  # exporter port
        protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app.kubernetes.io/component: mysql
        app.kubernetes.io/name: mysql
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.49.0
      name: mysql-test
      namespace: monitoring
    spec:
      clusterIP: None
      clusterIPs:
      - None
      ports:
      - name: mysql
        port: 9104
        protocol: TCP
      sessionAffinity: None
      type: ClusterIP
    ---
    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      labels:
        app.kubernetes.io/component: mysql
        app.kubernetes.io/name: mysql
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.49.0
      name: mysql-test
      namespace: monitoring
    spec:
      endpoints:
      - bearerTokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token
        interval: 30s
        port: mysql
        tlsConfig:
          insecureSkipVerify: true
      selector:
        matchLabels:
          app.kubernetes.io/component: mysql
          app.kubernetes.io/name: mysql
          app.kubernetes.io/part-of: kube-prometheus
    # Create
    [root@lecode-k8s-master monitor]# kubectl apply -f mysql.yaml
    endpoints/mysql-test created
    service/mysql-test created
    servicemonitor.monitoring.coreos.com/mysql-test created
    # Check
    [root@lecode-k8s-master monitor]# kubectl get -f mysql.yaml
    NAME                    ENDPOINTS          AGE
    endpoints/mysql-test    192.168.1.17:9104  10m
    NAME                    TYPE       CLUSTER-IP  EXTERNAL-IP  PORT(S)   AGE
    service/mysql-test      ClusterIP  None        <none>       9104/TCP  10m
    NAME                                             AGE
    servicemonitor.monitoring.coreos.com/mysql-test  10m
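The same three resources are needed for every external exporter, and only the name, IP, and port change. As a sketch under that assumption, the manifests can be generated by a small shell function. The name render_external_target is hypothetical, and the output is deliberately trimmed to the fields that tie the three resources together: a single app.kubernetes.io/name label, and no bearerTokenFile or tlsConfig because the exporters here speak plain HTTP. If your Prometheus resource sets a serviceMonitorSelector, the ServiceMonitor would additionally need the label it selects on.

```shell
#!/usr/bin/env bash
# render_external_target NAME IP PORT
# Prints an Endpoints, a selector-less headless Service, and a
# ServiceMonitor for an exporter running outside the cluster.
# Endpoints and Service share the name "<NAME>-test" so Kubernetes pairs
# them; the ServiceMonitor finds the Service by label and scrapes the
# port by its name.
render_external_target() {
  local name="$1" ip="$2" port="$3"
  cat <<EOF
apiVersion: v1
kind: Endpoints
metadata:
  name: ${name}-test
  namespace: monitoring
subsets:
- addresses:
  - ip: ${ip}
  ports:
  - name: ${name}
    port: ${port}
    protocol: TCP
---
apiVersion: v1
kind: Service
metadata:
  name: ${name}-test
  namespace: monitoring
  labels:
    app.kubernetes.io/name: ${name}
spec:
  clusterIP: None
  ports:
  - name: ${name}
    port: ${port}
    protocol: TCP
---
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: ${name}-test
  namespace: monitoring
spec:
  endpoints:
  - port: ${name}
    interval: 30s
  selector:
    matchLabels:
      app.kubernetes.io/name: ${name}
EOF
}
```

For instance, `render_external_target redis 192.168.1.17 9121 | kubectl apply -f -` would create resources comparable to a hand-written redis.yaml.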

    # Deploy redis_exporter
    # Download page: https://www.modb.pro/db/216588
    [root@lecode-test-001 ~]# tar xf redis_exporter-v1.3.2.linux-amd64.tar.gz
    [root@lecode-test-001 ~]# ll
    drwxr-xr-x 2 root root 4096 Nov 6 2019 redis_exporter-v1.3.2.linux-amd64
    -rw-r--r-- 1 root root 3376155 Oct 27 10:26 redis_exporter-v1.3.2.linux-amd64.tar.gz
    [root@lecode-test-001 ~]# mv redis_exporter-v1.3.2.linux-amd64 redis_exporter
    [root@lecode-test-001 ~]# cd redis_exporter
    [root@lecode-test-001 redis_exporter]# ll
    total 8488
    -rw-r--r-- 1 root root 1063 Nov 6 2019 LICENSE
    -rw-r--r-- 1 root root 10284 Nov 6 2019 README.md
    -rwxr-xr-x 1 root root 8675328 Nov 6 2019 redis_exporter
    [root@lecode-test-001 redis_exporter]# nohup ./redis_exporter -redis.addr 192.168.1.17:6379 -redis.password 'Redislecodetest@shuli123' &
    [1] 4564
    [root@lecode-test-001 redis_exporter]# nohup: ignoring input and appending output to ‘nohup.out’
    [root@lecode-test-001 redis_exporter]# tail -f nohup.out
    time="2022-10-27T10:26:48+08:00" level=info msg="Redis Metrics Exporter v1.3.2 build date: 2019-11-06-02:25:20 sha1: 175a69f33e8267e0a0ba47caab488db5e83a592e Go: go1.13.4 GOOS: linux GOARCH: amd64"
    time="2022-10-27T10:26:48+08:00" level=info msg="Providing metrics at :9121/metrics"  # the exporter port is 9121

    # Create the Redis ServiceMonitor resources
    [root@lecode-k8s-master monitor]# cat redis.yaml
    apiVersion: v1
    kind: Endpoints
    metadata:
      name: redis-test
      namespace: monitoring
    subsets:
    - addresses:
      - ip: 192.168.1.17  # IP of the server running the application
      ports:
      - name: redis
        port: 9121  # exporter port
        protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app.kubernetes.io/component: redis
        app.kubernetes.io/name: redis
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.49.0
      name: redis-test
      namespace: monitoring
    spec:
      clusterIP: None
      clusterIPs:
      - None
      ports:
      - name: redis
        port: 9121
        protocol: TCP
      sessionAffinity: None
      type: ClusterIP
    ---
    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      labels:
        app.kubernetes.io/component: redis
        app.kubernetes.io/name: redis
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.49.0
      name: redis-test
      namespace: monitoring
    spec:
      endpoints:
      - bearerTokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token
        interval: 30s
        port: redis
        tlsConfig:
          insecureSkipVerify: true
      selector:
        matchLabels:
          app.kubernetes.io/component: redis
          app.kubernetes.io/name: redis
          app.kubernetes.io/part-of: kube-prometheus
    # Create the resources
    [root@lecode-k8s-master monitor]# kubectl apply -f redis.yaml
    endpoints/redis-test created
    service/redis-test created
    servicemonitor.monitoring.coreos.com/redis-test created
    [root@lecode-k8s-master monitor]# kubectl get ep,svc,serviceMonitor -n monitoring |grep redis
    endpoints/redis-test 192.168.1.17:9121 6m2s
    service/redis-test ClusterIP None <none> 9121/TCP 6m2s
    servicemonitor.monitoring.coreos.com/redis-test 6m2s

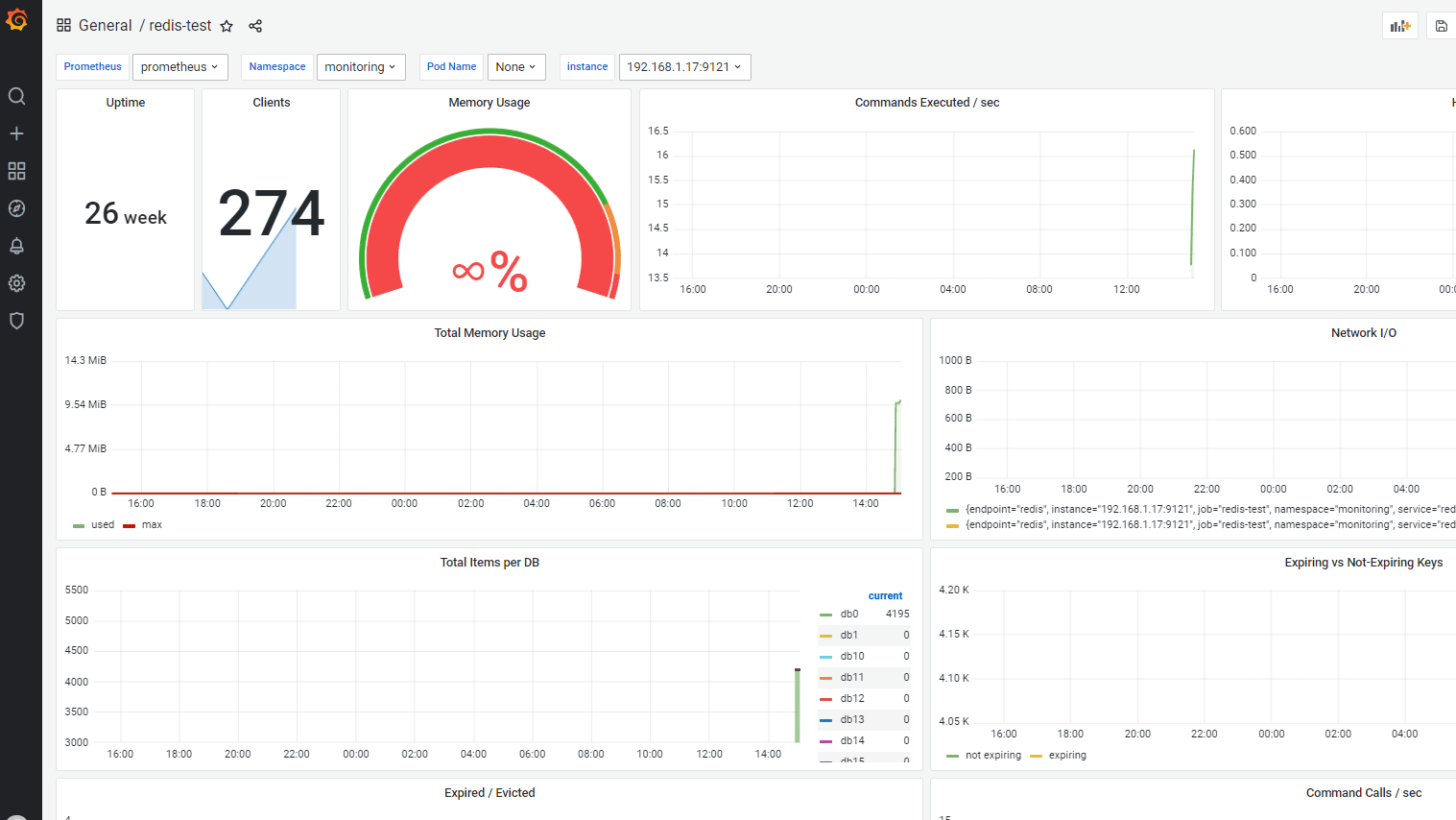

dashboard ID: 11835

    # Download page: https://www.modb.pro/db/216588
    [root@lecode-test-001 ~]# tar xf kafka_exporter-1.4.2.linux-amd64.tar.gz
    [root@lecode-test-001 ~]# ll
    drwxrwxr-x 2 2000 2000 4096 Sep 16 2021 kafka_exporter-1.4.2.linux-amd64
    -rw-r--r-- 1 root root 8499720 Oct 27 15:30 kafka_exporter-1.4.2.linux-amd64.tar.gz
    [root@lecode-test-001 ~]# mv kafka_exporter-1.4.2.linux-amd64 kafka_exporter
    [root@lecode-test-001 ~]# cd kafka_exporter
    [root@lecode-test-001 kafka_exporter]# ll
    total 17676
    -rwxr-xr-x 1 2000 2000 18086208 Sep 16 2021 kafka_exporter
    -rw-rw-r-- 1 2000 2000 11357 Sep 16 2021 LICENSE
    [root@lecode-test-001 kafka_exporter]# nohup ./kafka_exporter --kafka.server=192.168.1.17:9092 &
    [1] 20777
    [root@lecode-test-001 kafka_exporter]# nohup: ignoring input and appending output to ‘nohup.out’
    [root@lecode-test-001 kafka_exporter]# tail -f nohup.out
    I1027 15:32:38.904075 20777 kafka_exporter.go:769] Starting kafka_exporter (version=1.4.2, branch=HEAD, revision=0d5d4ac4ba63948748cc2c53b35ed95c310cd6f2)
    I1027 15:32:38.905515 20777 kafka_exporter.go:929] Listening on HTTP :9308  # the exporter port is 9308

    [root@lecode-k8s-master monitor]# cat kafka.yaml
    apiVersion: v1
    kind: Endpoints
    metadata:
      name: kafka-test
      namespace: monitoring
    subsets:
    - addresses:
      - ip: 192.168.1.17  # IP of the server running the application
      ports:
      - name: kafka
        port: 9308  # exporter port
        protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app.kubernetes.io/component: kafka
        app.kubernetes.io/name: kafka
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.49.0
      name: kafka-test
      namespace: monitoring
    spec:
      clusterIP: None
      clusterIPs:
      - None
      ports:
      - name: kafka
        port: 9308
        protocol: TCP
      sessionAffinity: None
      type: ClusterIP
    ---
    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      labels:
        app.kubernetes.io/component: kafka
        app.kubernetes.io/name: kafka
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.49.0
      name: kafka-test
      namespace: monitoring
    spec:
      endpoints:
      - bearerTokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token
        interval: 30s
        port: kafka
        tlsConfig:
          insecureSkipVerify: true
      selector:
        matchLabels:
          app.kubernetes.io/component: kafka
          app.kubernetes.io/name: kafka
          app.kubernetes.io/part-of: kube-prometheus
    # Create
    [root@lecode-k8s-master monitor]# kubectl apply -f kafka.yaml
    endpoints/kafka-test created
    service/kafka-test created
    servicemonitor.monitoring.coreos.com/kafka-test created
    [root@lecode-k8s-master monitor]# kubectl get -f kafka.yaml
    NAME                    ENDPOINTS          AGE
    endpoints/kafka-test    192.168.1.17:9308  8m49s
    NAME                    TYPE       CLUSTER-IP  EXTERNAL-IP  PORT(S)   AGE
    service/kafka-test      ClusterIP  None        <none>       9308/TCP  8m49s
    NAME                                             AGE
    servicemonitor.monitoring.coreos.com/kafka-test  8m48s

dashboard ID:7589

    # exporter download URL: https://github.com/carlpett/zookeeper_exporter/releases/download/v1.0.2/zookeeper_exporter
    [root@lecode-test-001 zookeeper_exporter]# nohup ./zookeeper_exporter -zookeeper 192.168.1.17:2181 -bind-addr :9143 &
    [2] 8310
    [root@lecode-test-001 zookeeper_exporter]# nohup: ignoring input and appending output to ‘nohup.out’
    [root@lecode-test-001 zookeeper_exporter]# tail -f nohup.out
    time="2022-10-27T15:58:27+08:00" level=info msg="zookeeper_exporter, version v1.0.2 (branch: HEAD, revision: d6e929223f6b3bf5ff25dd0340e8194cbd4d04fc)\n build user: @bd731f434d23\n build date: 2018-05-01T20:40:14+0000\n go version: go1.10.1"
    time="2022-10-27T15:58:27+08:00" level=info msg="Starting zookeeper_exporter"
    time="2022-10-27T15:58:27+08:00" level=info msg="Starting metric http endpoint on :9143"  # the exporter port is 9143

    [root@lecode-k8s-master monitor]# cat zookeeper.yaml
    apiVersion: v1
    kind: Endpoints
    metadata:
      name: zookeeper-test
      namespace: monitoring
    subsets:
    - addresses:
      - ip: 192.168.1.17
      ports:
      - name: zookeeper
        port: 9143
        protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app.kubernetes.io/component: zookeeper
        app.kubernetes.io/name: zookeeper
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.49.0
      name: zookeeper-test
      namespace: monitoring
    spec:
      clusterIP: None
      clusterIPs:
      - None
      ports:
      - name: zookeeper
        port: 9143
        protocol: TCP
      sessionAffinity: None
      type: ClusterIP
    ---
    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      labels:
        app.kubernetes.io/component: zookeeper
        app.kubernetes.io/name: zookeeper
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.49.0
      name: zookeeper-test
      namespace: monitoring
    spec:
      endpoints:
      - bearerTokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token
        interval: 30s
        port: zookeeper
        tlsConfig:
          insecureSkipVerify: true
      selector:
        matchLabels:
          app.kubernetes.io/component: zookeeper
          app.kubernetes.io/name: zookeeper
          app.kubernetes.io/part-of: kube-prometheus
    # Create
    [root@lecode-k8s-master monitor]# kubectl apply -f zookeeper.yaml
    endpoints/zookeeper-test created
    service/zookeeper-test created
    servicemonitor.monitoring.coreos.com/zookeeper-test created
    [root@lecode-k8s-master monitor]# kubectl get -f zookeeper.yaml
    NAME                        ENDPOINTS          AGE
    endpoints/zookeeper-test    192.168.1.17:9143  9m55s
    NAME                        TYPE       CLUSTER-IP  EXTERNAL-IP  PORT(S)   AGE
    service/zookeeper-test      ClusterIP  None        <none>       9143/TCP  9m55s
    NAME                                                 AGE
    servicemonitor.monitoring.coreos.com/zookeeper-test  9m55s

dashboard ID:15026